Have You Been Workslopped Yet?

AI is making you clean up your colleague's mess. Here's what you should do about it.

(Here for AI news? Scroll to the very bottom for 7 recent AI headlines you should know about.)

AI slop takes itself to the office

There’s a new AI term making the rounds and I’m utterly delighted by it: workslop.

Oh, how it rolls off the Schadenfreudean tongue! Oh, how I wish I’d coined it!

Alas, not I. Researchers at Stanford and BetterUp landed this gem in Harvard Business Review just last week, a fine pairing with the AI slop ruining everything from text to video to music to your toddler’s potty training.*

If you have not yet encountered AI slop, let me ruin your day for you. Behold Masters of Prophecy, one of the fastest-growing channels across all of YouTube. It went from a few hundred subscribers in February to over 36 million today. All of its music is AI-generated, dealing in quantity over quality. Curiously, it hopped from 300 subscribers to more than 100,000 in a single day without posting any new videos, shorts, or comments. Fishy much? Better yet, one of its videos (pasted below for scholarly purposes only) has over 100M views, which could translate to roughly $100k-$500k for the channel owner.***

Humans can’t compete with the internet-clogging speed and scale that AI offers. I guess I’ll pop a subscribe button below to help siphon off some of the indignation:

And now, back to work!

Workslop, that is.

You open a document from a colleague. It looks polished at first glance, but as you dig in, something’s off. It’s missing context. Some details smell funny. The logic doesn’t quite connect. And then it hits you: someone just pointed an AI tool at the problem, copied whatever came out, and called it a day.

If this sounds familiar, congratulations; you’ve been workslopped.****


What Exactly Is Workslop?

The HBR article defines it beautifully: workslop is AI-generated work content that masquerades as good work but lacks the substance to meaningfully advance a given task.

The authors surveyed 1,150 US-based full-time workers, 40% of whom reported having received workslop in the last month. Among these recipients, how ugly is it on average?

Average workslop share: 15.4% of workplace content.

Primary vector: Mostly peer-to-peer (40%).

Level of ambition: Workslop is ever so slightly more likely to rise (to managers, 18%) than to fall (from managers/execs, 16%).

Recipients felt: Annoyed (53%), confused (38%), offended (22%).

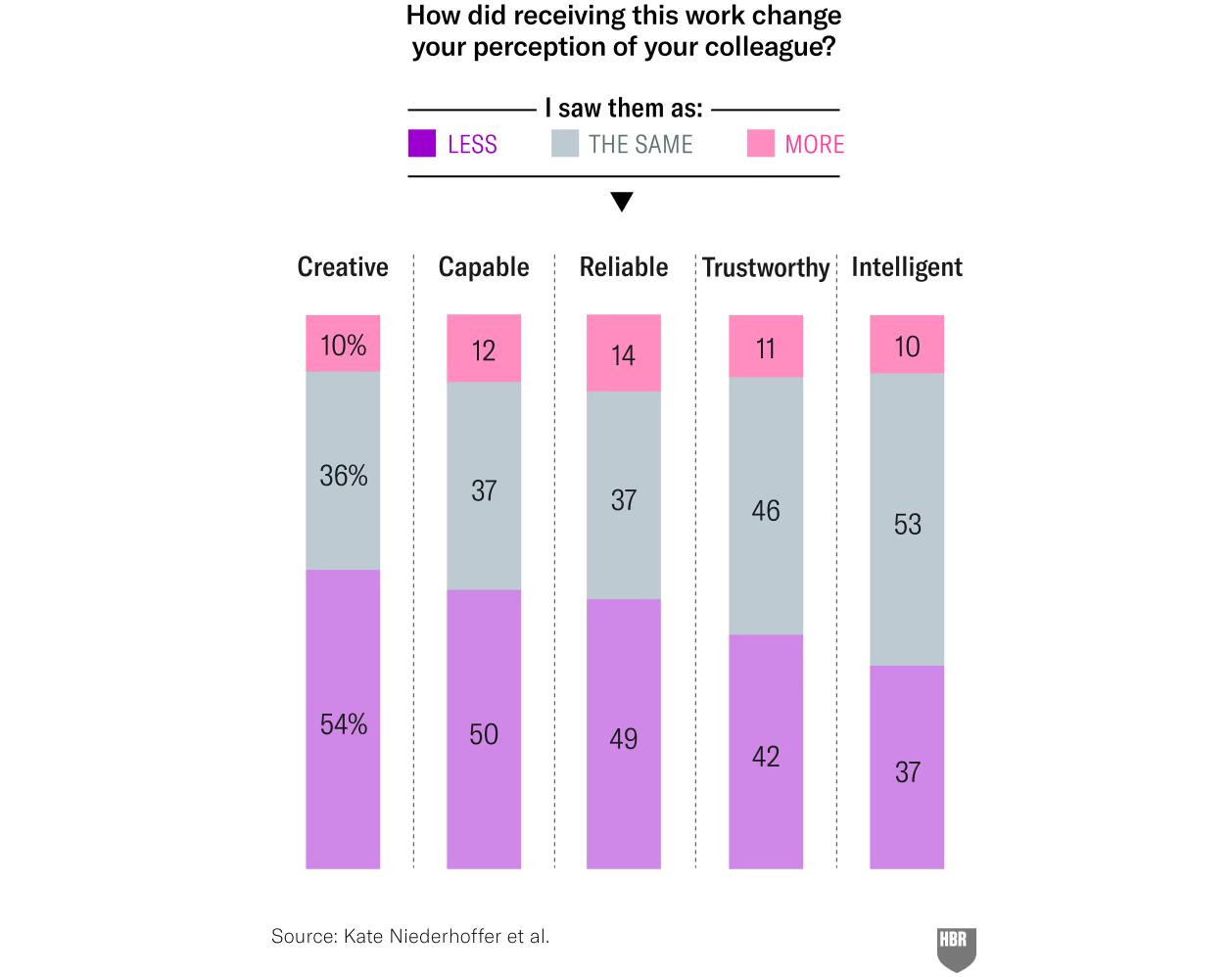

Trust eroded: Ouch (see the chart in the next section).

Invisible tax: almost 2 hours per instance, or $186 per employee per month.

Industries most impacted: Professional services and technology.

Equal parts titillating and unsurprising to anyone who understands that artificial intelligence is no match for natural stupidity.

Don’t get me wrong: I’m a firm believer in AI’s transformative potential… when we use it to reach higher. But when we use it to disengage, workslop is inevitable. Indeed, every kind of disengagement breeds its own kind of slop. You can do plenty of damage by being disengaged about AI, not only by disengaging while you use it.

What does workslop really represent? It’s thoughtlessness, enabled by AI.

Workslop happens when we let AI take over the thinking. When we treat it as a shortcut instead of a tool. When we optimize for looking productive instead of being productive.


Thoughtlessness enabled

AI is the great thoughtlessness enabler. It removes the friction that used to slow bad ideas down and force you to take your brain off screensaver mode to attempt something tricky. Until recently, getting a solution meant you sweated over the problem. Now you can skip wiggling the proverbial mouse and still produce something that looks polished to an equally checked-out observer. This is true of both GenAI, which lets you vibe work*****, and traditional AI, which lets you turn garbage data whose provenance you never bothered to check into functional enterprise solutions. (Please tell me you didn’t need me to hang this massive SARCASM BANNER here.)

Thought bypass surgery, without the surgery.

Unfortunately, thoughtlessness compounds. When no one double-checks, nonsense slips through as work. A single workslopped doc breeds more workslop. Your colleagues may mean well, but the outputs they share may still be weak links riddled with problems hidden by polish. That creates a gap between good faith and reliable results, making the whole decision chain more fragile.

The cost of workslop isn’t just wasted time (an average of almost 2 hours lost per workslop instance in the HBR sample); it’s wrong turns taken with confidence.

According to HBR, every time you send workslop, half the people receiving it are downgrading their assessment of your competence. A third would prefer not to work with you in the future. It’s not just wasting time. It’s eroding trust. It’s damaging relationships. It’s degrading the quality of collaboration across your entire organization.

How to be the solution, not the problem

If AI enables thoughtlessness, it’s on us humans to re-inject thoughtfulness into the process. Think of AI as a way to redistribute thoughtfulness towards your priorities, not as a way to skib** it.

That sounds simple, but simple and easy are not the same thing. AI makes thoughtlessness incredibly easy. That’s not going to change. So we need to make thoughtfulness an intentional practice.

Before you press that button, ask yourself:

Value: Is this important? Does it matter if it’s wrong?

Context: What specifically do I need here? What context is essential?

Risk: What could go wrong if key details are missing?

Check: What am I expecting to see? How would I verify this output?

If your task is important and your answer is “I won’t,” then stop right there.

After the AI gives you something, ask yourself:

Does this actually meet my needs?

Have I checked it enough?

Would I stake my reputation on it?

Because you are. You absolutely are.

Leaders, your slop is so much grander

As more leaders hop on the “AI everywhere, all the time” bandwagon, brace yourself for… grandslop. Those AI mandates sound great in theory. In practice, they’re exponentially thoughtless.

You know what those dramatic ROI failure stats****** tell me about AI? That the problem is one of leadership, not technology. Leaders asleep at the wheel will use AI to burn budgets. Leaders with clear vision will use AI to define the next era of business.


For a proper dressing down, head over to HBR. I’ll keep it short here:

Wise leaders are those who model thoughtful AI use. Let your people see you thinking critically about AI outputs. Set clear guardrails — not to restrict, but to guide. Champion AI literacy and reward quality over velocity. I know that’s hard in a world that’s moving fast, but as they say: more haste, more slop.

How you lead will determine whether your people use AI to shirk or to supercharge.

Isn’t the irony rich?

Your organization adopted AI to save time. And now HBR is telling you to budget two of your precious hours towards cleaning up each instance of someone else’s careless AI use.

How about we all do our part to start turning that trend around? Next time you’re tempted to copy-paste some AI output directly into your deliverable, pause. Ask yourself: am I using this AI tool to reach higher? Or am I just being lazy? Am I creating work? Or am I creating workslop?

Because the world has enough slop already.

Thank you for reading — and sharing!

I’d be much obliged if you could share this post with the smartest leader you know.

I recently taught a pilot of an everyone-friendly Decision-Making with ChatGPT course, so I’m seriously considering offering a 3h live virtual session (2h theory + 1h AMA) on Maven. Let me know if you’d be interested in taking it:

In other news, the first few cohorts of my Agentic AI for Leaders course were a triumph and we’ve opened enrollment for an additional cohort to meet demand.

The course is specifically designed for business leaders, so if you know one who’d benefit from some straight talk on this underhyped overhyped topic, please send 'em this link: bit.ly/agenticcourse

🎤 MakeCassieTalk.com

Yup, that’s the URL for my public speaking. “makecassietalk.com” Couldn’t resist. 😂

Use this form to invite me to speak at your event, advise your leaders, or train your staff. Got AI mandates and not sure what to do about them? Let me help. I’ve been helping companies go AI-First for a long time, starting with Google in 2016. If your company wants the very best, invite me to visit you in person.

🦶Footnotes

* Concerned parents told Reddit that their toddler “refuses to use the toilet now due to skibidi toilet.** Whenever we try to put him on it he screams and refuses to go anywhere near it. We’ve tried explaining that Skibidi Toilet isn’t real and our toilet is completely safe, but it seems like it’s too overwhelming for him.”

** Skibidi Toilet is an AI slop video (screenshot below to give you a sense of this High Art), and it’s also the etymology of that slang your tween loves to irritate you with.

*** AI-generated slop is surging on YouTube... and its algorithm appears to favor AI over humans now. What makes bot inflation worth it for YouTube? AI lowers costs to cents per video while keeping quality “good enough.” If advertisers don’t object, YouTube may quietly let AI take over, leaving human creators sidelined until users suddenly notice: “there aren’t any humans on it anymore.” Your attention is valuable, so spend it wisely. The internet becomes what you make it.

**** HBR may have birthed the noun, but I… I shall raise the verb to be a fine participle.

***** Runner-up prize for second-best neologism of the week goes to Microsoft, which just launched “vibe working” (its agent mode for Excel and Word). A gerund, how fancy!

****** 95% of organizations fail to see measurable ROI from GenAI. Details here.

🗞️ AI News Roundup!

In recent news:

1. Claude Sonnet 4.5 is here to work for 30 hours straight on complex coding tasks

Anthropic’s latest model can keep working on complex tasks for over 30 hours, smoking the already-impressive 7 hours OpenAI reported for Codex, while setting records on real-world coding and computer-use tests. It also adds developer tools like checkpoints, code execution, and a VS Code extension, plus a new Agent SDK and stronger safety controls.

2. OpenAI debuts video generator Sora 2

Sora 2 makes a leap from Sora 1’s janky prompt-matched clips to physics-aware, persistent video with synced audio. The new iOS app (rolling out in the US and Canada this week) adds cameo avatars, consent controls, and remix tools.

Meanwhile, Google DeepMind released a nifty paper showing that its video model Veo 3 can solve a wide range of visual tasks zero-shot, from perception and physics modeling to manipulation and reasoning. The authors suggest that video models are on track to become general-purpose foundation models for vision, much as LLMs did for language understanding.

3. Accenture tells its staff to get AI literate or get lost

Accenture plans to lay off staff unable to reskill on AI as it doubles down on its restructuring, investing $865 million in a six-month business optimization program. If your company is considering similar changes, there are humane ways and harsh ways to handle them. I hope you’ll pick the better option. Feeling lost? I’ve helped organizations build AI literacy and reskill workforces for over a decade, starting with Google’s AI-first shift. To get started, request a workshop at makecassietalk.com (flavors include board of directors / managers and execs / general staff; let me know what you need); it’ll cost you much, much less than $865 million.

4. Musk’s xAI undercuts rivals with federal deal to sell Grok chatbot for 42 cents per agency

xAI secured an 18-month U.S. government contract letting executive agencies use Grok for 42 cents per agency, less than half the $1-per-agency price offered by OpenAI and Anthropic. The package includes integration support from xAI engineers, despite prior controversies over Grok generating offensive content. Some estimates put the cost of training Grok 4 at $490 million, underscoring the scale of investment behind the discounted entry into federal procurement.

5. Alibaba drops flurry of AI upgrades, including lip-reading live translator

Alibaba introduced Qwen3-Omni, its omni-modal model for text, images, audio, and video with real-time streaming, coverage of 119 languages, and benchmark wins over GPT-4o and Gemini-2.5 Pro. The launch also included Qwen3-VL (vision and language), Qwen3-Max (reasoning and coding), and Qwen3-LiveTranslate-Flash (translation aided by gesture recognition and lip-reading… in case you thought those distant cameras can’t hear you). Go big or go home!

6. Study suggests GenAI will affect tech jobs more than other sectors

An analysis by Indeed indicates tech roles face the deepest AI-driven changes: over half the skills in tech jobs could be transformed by generative AI (nearly 3 in 5 fully automatable skills are tech-related). Meanwhile, another report finds that 90% of software developers are using AI at work, with 65% “heavily reliant” on it.

7. MIT team teaches AI to design crystal patterns that could power better quantum computers

MIT and collaborators built a tool called SCIGEN that guides AI to create the rare crystal shapes most likely to unlock new quantum materials. The system generated over 10 million options, with supercomputer tests showing thousands could have unusual magnetic traits. Two new compounds were successfully made in the lab, pointing to a faster path toward more stable qubits.

Forwarding this email to a friend or sharing it on social media goes a long way toward encouraging my writing. Thank you thank you!